AI-generated images and videos pose a threat to democratic processes and undermine trust within society. Researchers at ETH Zurich have now developed chip technology that makes it possible to verify the authenticity of sensor data, including images and videos.

Artificial intelligence (AI) now makes it alarmingly easy to manipulate photos, videos and audio recordings. Whether it is fabricated statements attributed to politicians or misleading images from crisis zones, social media and online platforms are already flooded with so-called deepfakes. The consequences for society and democracy are serious: an increasing number of people are being deceived by such forgeries or are beginning to mistrust even credible sources.

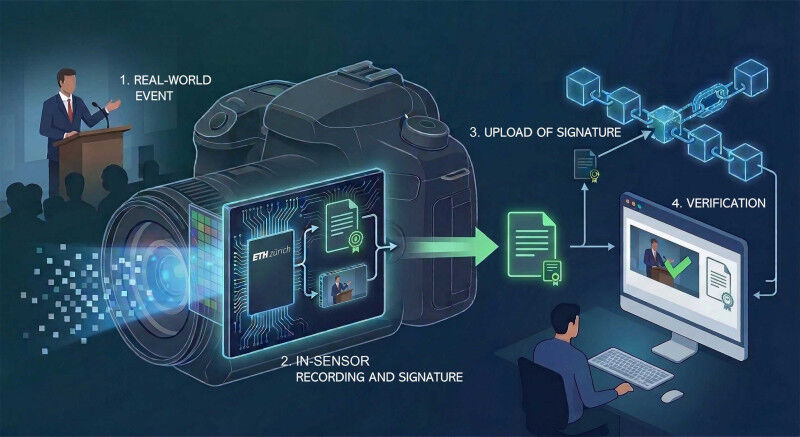

Researchers at ETH Zurich have developed a new sensor technology that directly addresses this issue. The concept involves cryptographically signing images, videos or audio signals within a sensor chip at the exact moment they are captured. The signature makes it possible to verify that the data genuinely originates from a camera or recording device, when it was captured, and that it has not been altered since. "If data is signed the moment it is captured, any later manipulation leaves traces," explains Fernando Cardes, who co-developed the technology. He is a research associate at Andreas Hierlemann’s Professorship of Biosystems Engineering within the Department of Biosystems Science and Engineering (BSSE) in Basel. "To manipulate the data, the chip would have to be physically attacked, requiring a massive technological effort so that the mass generation of manipulated content for social media platforms would be practically impossible," he adds.
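The idea of signing data at the moment of capture can be illustrated with a minimal Python sketch. The article does not specify which signature scheme the chip uses, so this example substitutes HMAC-SHA256 with a secret key as a stand-in; a real deployment would presumably use a public-key signature so that anyone can verify without knowing the secret. The names `CHIP_KEY`, `sign_capture` and `verify_capture`, and the record layout, are hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical secret that never leaves the sensor chip (assumption for this sketch).
CHIP_KEY = b"secret-held-inside-the-sensor"


def _payload(record: dict) -> bytes:
    """Serialize the signed fields in a stable order."""
    return json.dumps(record, sort_keys=True).encode()


def sign_capture(raw: bytes, sensor_id: str, captured_at: int) -> dict:
    """Bind the raw sensor data to its source and capture time."""
    record = {
        "sensor_id": sensor_id,
        "captured_at": captured_at,
        "sha256": hashlib.sha256(raw).hexdigest(),
    }
    # HMAC stands in for the chip's signature; the real scheme is not given in the article.
    signature = hmac.new(CHIP_KEY, _payload(record), hashlib.sha256).hexdigest()
    return {**record, "signature": signature}


def verify_capture(raw: bytes, record: dict) -> bool:
    """Return True only if data, source and timestamp all match the signature."""
    claimed = {k: v for k, v in record.items() if k != "signature"}
    if hashlib.sha256(raw).hexdigest() != claimed.get("sha256"):
        return False  # the data itself was altered
    expected = hmac.new(CHIP_KEY, _payload(claimed), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record.get("signature", ""))


frame = b"raw pixel data"
record = sign_capture(frame, "sensor-001", 1700000000)
print(verify_capture(frame, record))                          # True
print(verify_capture(frame + b"x", record))                   # False
print(verify_capture(frame, {**record, "captured_at": 1}))    # False
```

Because the signature covers the data hash, the sensor ID and the timestamp together, changing any one of them after capture makes verification fail.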

Simple verification thanks to a public register

The signatures generated by the sensor could be stored by camera manufacturers in a publicly accessible, immutable ledger (e.g., a blockchain). This approach would enable anyone to verify the authenticity of the data in question at any time by checking it against the chip’s signature stored in the ledger and thereby confirming its source. "As such, it is barely of any relevance whether a person or the technology involved in data processing and transmission is trustworthy," explains Felix Franke, who co-developed the chip at ETH Zurich and is now a professor at the University of Basel. "Trust in digital content is eroding. We wanted to create a technology that gives people a way to verify whether something is genuine."
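How a public, append-only register supports this kind of check can be sketched in a few lines of Python. This is a toy hash chain, not a real blockchain and not the researchers' system: the `Ledger` class, its methods and the entry fields are hypothetical, and the signature is stored as an opaque string. A complete verifier would additionally check that signature against the manufacturer's public key.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(data_hash: str, signature: str, prev: str) -> str:
    body = {"data_hash": data_hash, "signature": signature, "prev": prev}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class Ledger:
    """Toy append-only ledger: each entry commits to the one before it."""

    def __init__(self):
        self.entries = []

    def append(self, data_hash: str, signature: str) -> int:
        prev = self.entries[-1]["entry_hash"] if self.entries else GENESIS
        self.entries.append({
            "data_hash": data_hash,
            "signature": signature,
            "prev": prev,
            "entry_hash": _entry_hash(data_hash, signature, prev),
        })
        return len(self.entries) - 1

    def is_intact(self) -> bool:
        """Recompute the chain; any edited entry breaks it."""
        prev = GENESIS
        for e in self.entries:
            if e["prev"] != prev:
                return False
            if _entry_hash(e["data_hash"], e["signature"], prev) != e["entry_hash"]:
                return False
            prev = e["entry_hash"]
        return True

    def lookup(self, data: bytes):
        """Find the ledger entry for a file, or None if it was never registered."""
        h = hashlib.sha256(data).hexdigest()
        return next((e for e in self.entries if e["data_hash"] == h), None)


ledger = Ledger()
photo = b"original photo bytes"
ledger.append(hashlib.sha256(photo).hexdigest(), "signature-from-chip")

print(ledger.lookup(photo) is not None)       # True: registered at capture
print(ledger.lookup(b"edited photo bytes"))   # None: no matching entry
print(ledger.is_intact())                     # True
```

The design point is that the verifier only needs the file and read access to the ledger; nothing along the processing or transmission path has to be trusted.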

In principle, the technology can be incorporated into any type of sensor or camera. In the future, social media platforms could automatically verify whether the content is genuine as soon as it is uploaded. Where this does not happen, journalists, researchers or public authorities could authenticate content themselves using simple tools.

The threat of deepfakes identified at an early stage

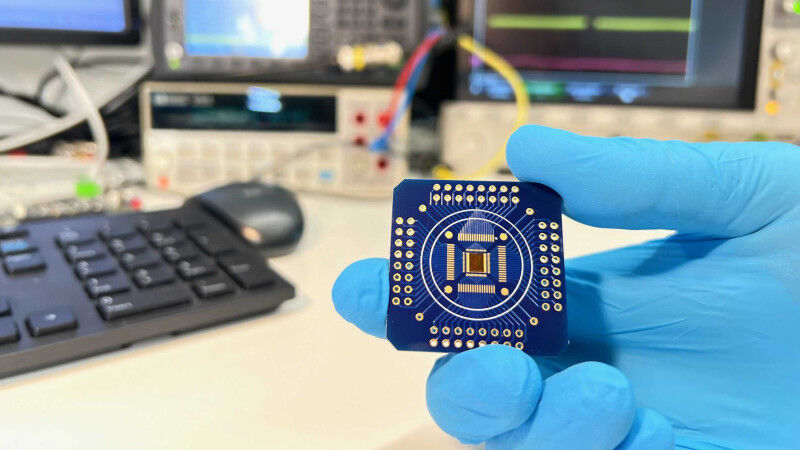

The idea behind the sensor chips originated as a side project at the Bio Engineering Laboratory at ETH Zurich. Long before AI systems like ChatGPT became a subject of public debate, the laboratory had been working on highly sensitive sensors to measure electrical signals from living cells. The interdisciplinary team also had the necessary expertise to incorporate additional cryptographic functions directly into the sensor chips. "The danger posed by deepfakes was foreseeable," recalls Franke. As early as 2017, the team therefore set out to develop a sensor whose data could not be manipulated without detection.

The chip described in a paper published today is a working prototype and demonstrates technical feasibility. Further steps are still required prior to commercial deployment. Nevertheless, the researchers are confident that, with current technologies and processes, the chip can be developed into a working, market-ready product. They have therefore filed a patent application. "We are currently exploring how to reduce costs for camera and sensor manufacturers should they wish to incorporate the new technology into their chips," reports Cardes.

Reference

Cardes F, Bürgel S, Yuan X, Yu Q, Rubino A, Lee J, Bounik R, Viswam V, Hierlemann A, Franke F. In-sensor cryptographic signature generation for linking a physical process and an immutable digital entity. Nature Electronics 2026,