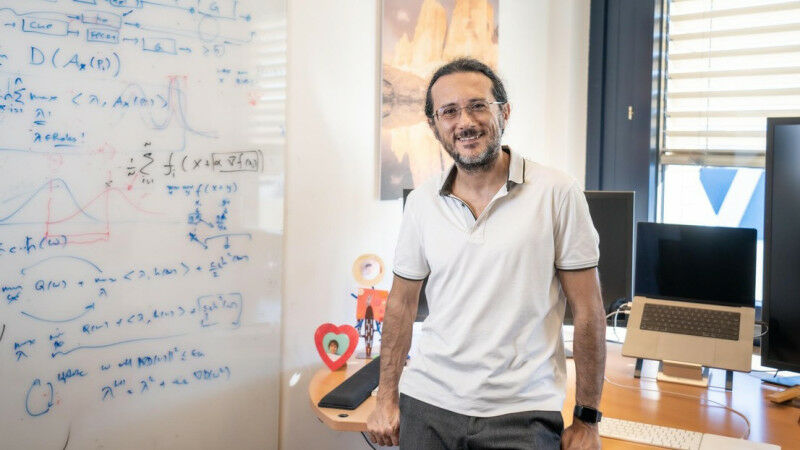

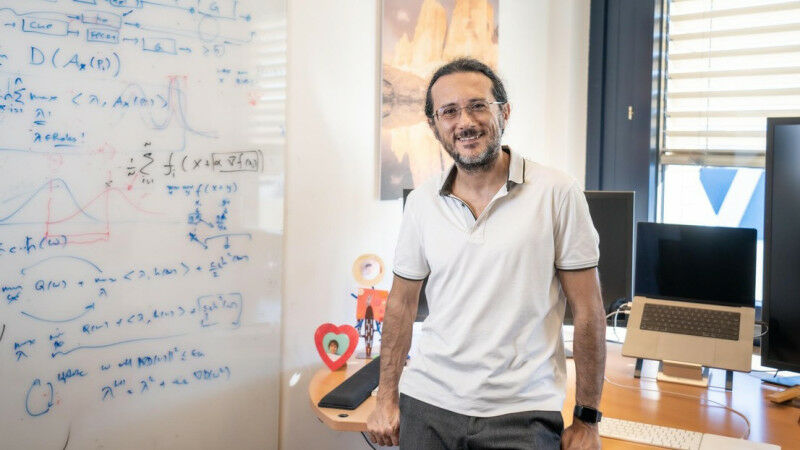

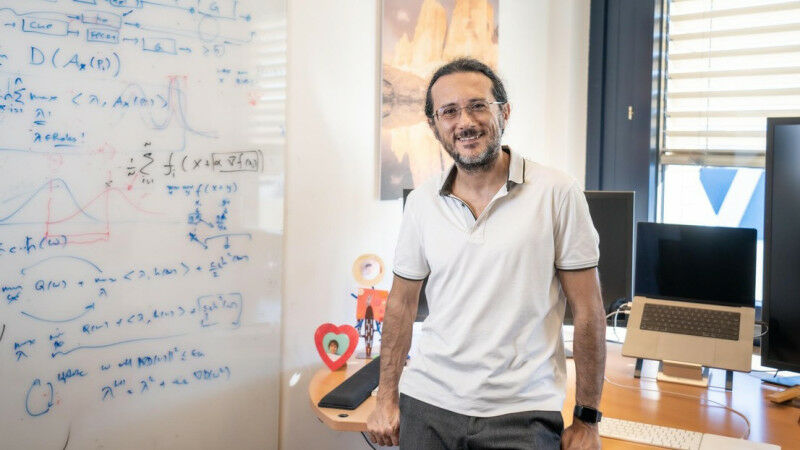

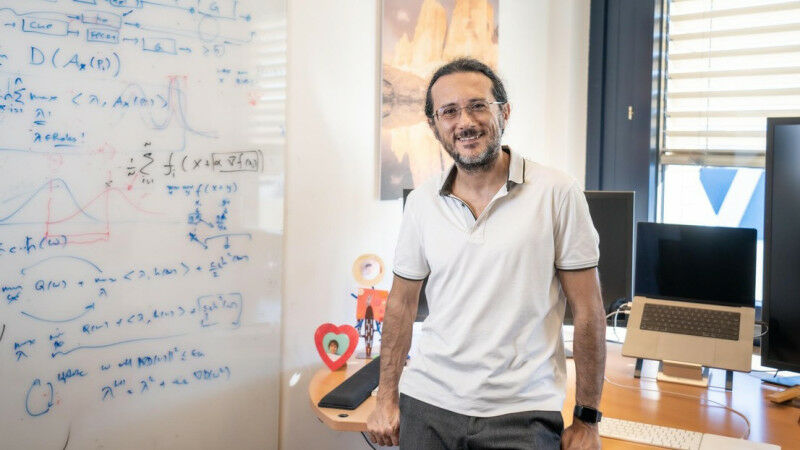

© 2023 Titouan Veuillet / EPFL

Researchers at EPFL have uncovered a fundamental flaw in the training of machine learning systems and developed a new formulation for strengthening them against adversarial attacks.

By completely rethinking the way that most Artificial Intelligence (AI) systems protect against attacks, researchers at EPFL's School of Engineering have developed a new training approach to ensure that machine learning models, particularly deep neural networks, consistently perform as intended, significantly enhancing their reliability. Effectively replacing a long-standing training approach based on a zero-sum game, the new model employs a continuously adaptive attack strategy to create a more intelligent training scenario. The results are applicable across a wide range of activities that depend on artificial intelligence for classification, such as safeguarding video streaming content, self-driving vehicles, and surveillance.

The pioneering research was a close collaboration between the Laboratory for Information and Inference Systems (LIONS) at EPFL's School of Engineering and researchers at the University of Pennsylvania (UPenn).

In a digital world where the volume of data surpasses human capacity for full oversight, AI systems wield substantial power in making critical decisions. However, these systems are not immune to subtle yet potent attacks.
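For readers unfamiliar with the "zero-sum game" framing, conventional adversarial training pits an attacker that tries to maximize the model's loss against a trainer that minimizes that same loss. The sketch below illustrates only that conventional setup, not the new EPFL/UPenn method, whose details are not given here. It assumes a toy linear classifier with logistic loss and perturbations bounded in the L-infinity norm, a case where the attacker's best move has a closed form. All function names and the example data are hypothetical.

```python
import math

def softplus(z):
    # Numerically stable log(1 + exp(z)).
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def sign(v):
    return (v > 0) - (v < 0)

def worst_case_margin(w, b, x, y, eps):
    # Attacker's move (inner maximization): for a linear score w.x + b and a
    # label y in {-1, +1}, the strongest L-infinity perturbation of radius eps
    # is delta = -eps * y * sign(w), which shrinks the margin by eps * ||w||_1.
    clean = y * (sum(wi * xi for wi, xi in zip(w, x)) + b)
    return clean - eps * sum(abs(wi) for wi in w)

def robust_loss(w, b, data, eps):
    # Average logistic loss when every point is perturbed in the worst way.
    return sum(softplus(-worst_case_margin(w, b, x, y, eps)) for x, y in data) / len(data)

def adversarial_train(data, eps, lr=0.1, steps=500):
    # Defender's move (outer minimization): gradient descent on the very loss
    # the attacker maximizes, which is what makes the game zero-sum.
    dim = len(data[0][0])
    w, b = [0.0] * dim, 0.0
    n = len(data)
    for _ in range(steps):
        gw, gb = [0.0] * dim, 0.0
        for x, y in data:
            s = sigmoid(-worst_case_margin(w, b, x, y, eps))
            for i in range(dim):
                gw[i] += -s * (y * x[i] - eps * sign(w[i]))
            gb += -s * y
        w = [wi - lr * gi / n for wi, gi in zip(w, gw)]
        b -= lr * gb / n
    return w, b

def predict(w, b, x):
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b >= 0 else -1
```

For example, `adversarial_train([((2.0, 1.0), 1), ((-2.0, -1.0), -1)], eps=0.5)` returns weights that keep both points correctly classified even after each is shifted by up to 0.5 per coordinate in the attacker's preferred direction. Because attacker and defender optimize one shared loss here, the sketch is an instance of the zero-sum formulation that, according to the article, the new approach replaces.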
