Researchers at Carnegie Mellon University are developing new technology that could reduce the energy data centers need to operate, easing the strain on the energy grid that Americans rely on.

Why it matters

The electricity required to run data centers, particularly those powering artificial intelligence, is straining the U.S. energy grid. Data center electricity demand is projected to double or triple in the next few years, according to the U.S. Department of Energy, and could account for as much as 12% of the country’s total electricity consumption by 2028.

Rising energy demand is already driving up utility bills for Americans across the country, according to the U.S. Energy Information Administration, an independent federal agency that collects and analyzes data about energy and the economy.

Designing energy-efficient servers

Akshitha Sriraman, an assistant professor of electrical and computer engineering in the College of Engineering, and her team are designing computer servers that require less energy over their lifecycle.

"We are computer architects and systems researchers, which means that we try to figure out how we can design the data center hardware devices in ways that are more efficient and sustainable," Sriraman said.

To do that, Sriraman’s team blends new and old technology, applying the sustainable resource management principles of reducing and reusing to server hardware design.

"We call them ’carbon-efficient servers,’" she said. "Our new hardware design is able to run things more efficiently and it’s also more sustainable."

Balancing these environmental goals is a central part of Sriraman’s work. Her lab reuses older server components to keep them out of landfills longer, and the new parts it incorporates are much more energy efficient.

"There’s a tradeoff," she said. "On the one hand, reusing older components might be less energy efficient. But if I’m keeping a component around longer, that means I don’t have to use energy to manufacture a new component."

Big tech companies are already showing interest in the technology. Sriraman said Microsoft is exploring adopting her designs for both internal and public cloud customers. As customer interest grows, she explained, Microsoft is considering her designs as an important strategy for meeting its publicly stated 2030 decarbonization targets.

The potential impact is significant. According to Sriraman, adoption of these efficient servers by large cloud companies could cut emissions on the scale of entire nations’ annual carbon footprints.

"These carbon-efficient servers’ widespread use can cut roughly 100 million of the 2.5 billion metric tons of carbon emissions that the cloud is projected to emit by 2030, equivalent to eliminating annual emissions from entire countries like Qatar or Venezuela," she said. "So that is a really nice result for us."

A new kind of chip

The processing chips that make computers run are made up of complex electrical circuits and can be thought of as a computer’s command center. For general-purpose computing, the two main types are central processing units (CPUs) and graphics processing units (GPUs). Making them more energy efficient can have a big impact.

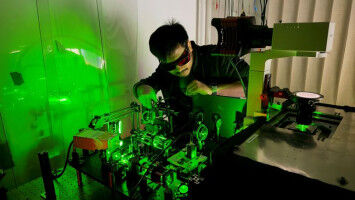

Brandon Lucia, a professor of electrical and computer engineering, and Nathan Beckmann, an associate professor in the School of Computer Science’s Department of Computer Science, created an entirely new type of chip architecture to do just that. Their company, Efficient Computer, recently announced $60 million in new funding to expand the work.

"Efficient’s architecture is a fundamentally new type of processor for general purpose computing," said Lucia, Efficient Computer’s CEO. Traditional processors constantly retrieve instructions from memory, and move data around inside the chip frequently. These steps use a lot of energy and might be repeated billions of times per second. "We have a new way of mapping software into hardware, which eliminates the need to fetch new instructions cycle by cycle, and improves how data flows in the chip. We eliminate a huge energy sink."

The physical architecture of Efficient Computer’s new processor means it can move data and carry out instructions using just a fraction of the energy traditional CPUs or GPUs require.
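A toy model can make Lucia’s point about instruction fetches concrete. In the sketch below, a hypothetical conventional core re-fetches each instruction for every data element, while a hypothetical "configure once" core maps the same two-operation program onto fixed stages and then only streams data through them. It counts operations, not joules, and it is not a description of Efficient Computer’s actual design; the program, the function names, and the counting scheme are all illustrative assumptions.

```python
from typing import Callable, List, Tuple

# Hypothetical two-operation program computing y = a*x + b for each input x.
PROGRAM: List[Tuple[str, str]] = [("mul", "a"), ("add", "b")]


def run_fetching(xs: List[int], a: int, b: int) -> Tuple[List[int], int]:
    """Toy conventional core: every operation is fetched again for every input element."""
    regs = {"a": a, "b": b}
    fetches = 0
    ys = []
    for x in xs:
        acc = x
        for op, reg in PROGRAM:
            fetches += 1  # one instruction fetch per operation, per element
            acc = acc * regs[reg] if op == "mul" else acc + regs[reg]
        ys.append(acc)
    return ys, fetches


def run_mapped(xs: List[int], a: int, b: int) -> Tuple[List[int], int]:
    """Toy 'configure once' core: the program is mapped onto fixed stages up front,
    then data simply flows through them with no further instruction fetches."""
    regs = {"a": a, "b": b}
    stages: List[Callable[[int], int]] = []
    for op, reg in PROGRAM:  # one-time configuration step per operation
        k = regs[reg]
        stages.append((lambda v, k=k: v * k) if op == "mul" else (lambda v, k=k: v + k))
    ys = []
    for x in xs:
        v = x
        for stage in stages:
            v = stage(v)
        ys.append(v)
    return ys, len(PROGRAM)


xs = list(range(1_000))
print(run_fetching(xs, 2, 3)[1])  # 2000 fetches for 1,000 elements
print(run_mapped(xs, 2, 3)[1])    # 2 configuration steps, independent of input size
```

The takeaway is only the scaling: in this toy, fetch count grows with the amount of data processed, while the one-time mapping does not. Real energy savings depend on hardware details the sketch ignores.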

"We are 10 times more energy efficient than the best low-power general purpose computers on the market today," Lucia said. "Meaning, if you hook ours up to a battery, hook theirs up to a battery, you just run a general purpose computation over and over - ours will last 10 times longer. A few weeks become years."

Working overnight

What if AI data centers did their work when electricity demand is low?

Peter Zhang, an assistant professor of operations research at the Heinz College of Information Systems and Public Policy, is exploring whether dynamic workload adjustments could help stabilize the electricity demand profiles of data centers, and what it would take to incentivize a shift to overnight schedules. He hopes nocturnal data centers could reduce AI’s strain on the country’s aging energy grid.
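The scheduling intuition behind shifting work overnight can be illustrated with a simple "valley-filling" heuristic: deferrable load is placed, one unit at a time, into whichever hour currently has the lowest total grid demand, subject to a per-hour cap. This is a hypothetical sketch, not Zhang’s model; the function name, the hourly demand profile, and the cap are assumptions, and it says nothing about the incentive questions his research addresses.

```python
import heapq
from typing import List, Tuple


def valley_fill(baseline_mw: List[float], flexible_mwh: float, cap_mw: float,
                step_mwh: float = 1.0) -> Tuple[List[float], float]:
    """Spread deferrable load over hourly slots, one step at a time, always into the
    hour whose total demand (baseline + already assigned) is currently lowest.

    Returns the per-hour allocation and any load that could not be placed under the cap.
    """
    alloc = [0.0] * len(baseline_mw)
    heap = [(demand, hour) for hour, demand in enumerate(baseline_mw)]
    heapq.heapify(heap)  # min-heap keyed on current total demand
    remaining = flexible_mwh
    while remaining > 1e-9 and heap:
        total, hour = heapq.heappop(heap)
        step = min(step_mwh, remaining, cap_mw - alloc[hour])
        alloc[hour] += step
        remaining -= step
        if alloc[hour] < cap_mw - 1e-9:  # hour still has headroom, so keep it eligible
            heapq.heappush(heap, (total + step, hour))
    return alloc, remaining


# Illustrative six-hour demand profile (MW); the dip in hours 2-3 stands in for "overnight".
baseline = [50.0, 40.0, 30.0, 30.0, 60.0, 80.0]
alloc, unplaced = valley_fill(baseline, flexible_mwh=20.0, cap_mw=15.0)
print(alloc)  # [0.0, 0.0, 10.0, 10.0, 0.0, 0.0]
```

In this made-up profile, all 20 MWh of flexible work lands in the two low-demand hours, lifting them to the level of the next-lowest hour instead of adding to the 80 MW peak. A realistic scheme would likely also need to handle job deadlines and electricity prices.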

Zhang’s proposal won the inaugural AI & Energy seed grant, co-sponsored by the Scott Institute for Energy Innovation and the Block Center for Technology and Society.

Carnegie Mellon’s Wilton E. Scott Institute for Energy Innovation is leading the nation in facilitating discussion and driving action toward decarbonizing our energy economy. CMU’s Energy Week brings energy and sustainability leaders from across the nation to exchange ideas on the world’s most pressing energy issues.
